AI Form Finding

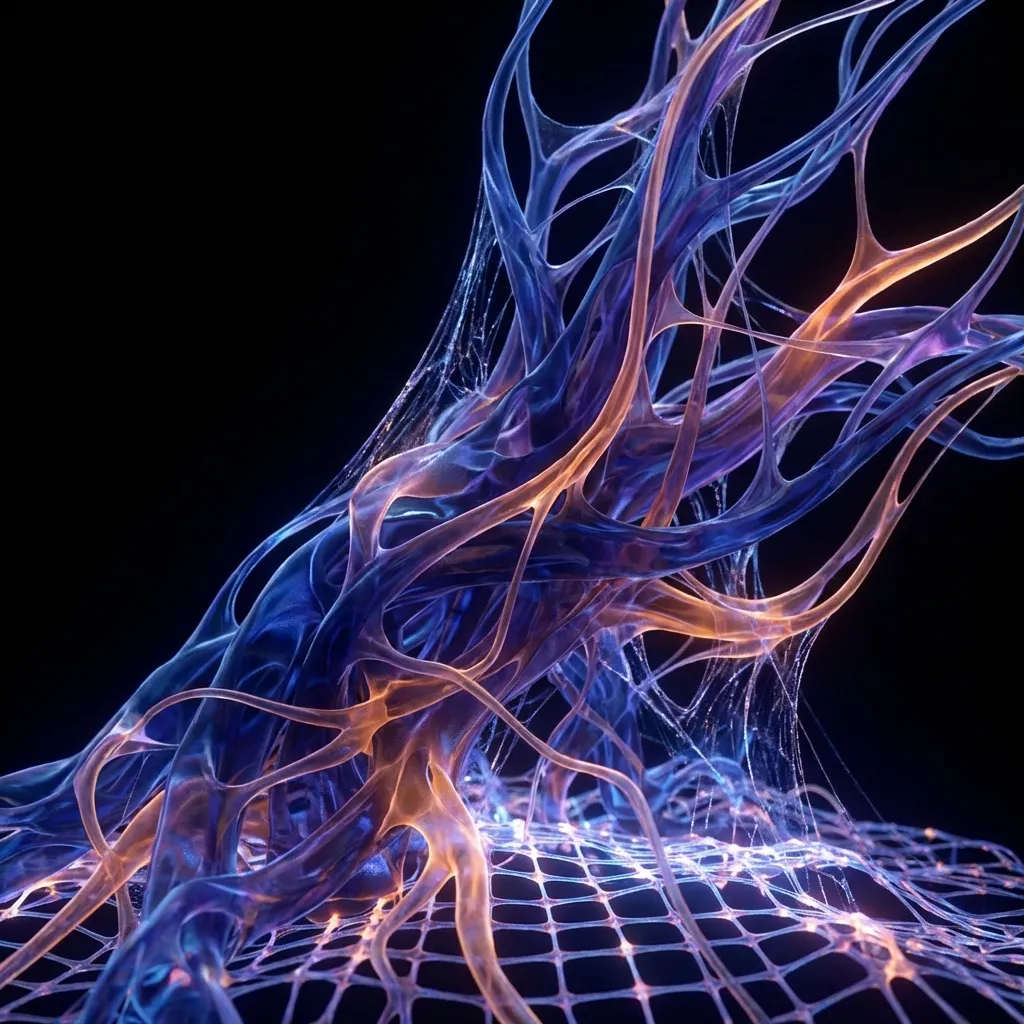

What if machines could dream buildings?

Frei Otto spent years with soap bubbles. Gaudí hung chains and turned the result upside down. Form-finding has always been slow.

We asked: what if we could compress that process? What if a machine could explore thousands of forms in the time it takes an architect to sketch one?

So we trained neural networks on 50,847 architectural images and 3D models. Not to copy existing buildings, but to learn the underlying logic of structure. Then we asked the networks to generate new forms.

The results surprised us. The AI didn't just remix what it had seen. It found forms we hadn't imagined. Branching structures that look like coral. Shells that twist in ways no human would draw. And 68% of them actually work. They pass structural analysis. Physics accepts them.

400 Forms in 2 Hours: Green overlay indicates structural validation. The AI generates faster than we can evaluate.

Theoretical Framework

Training Data

50,847 images and 3D models from ArchDaily, university archives, and our own projects. Tagged by structure type, material, and span.

Structural Feedback

Every generated form goes through Karamba FEA. If it fails, that failure is fed back to the network as a training signal. Over time, the network learns to favor forms that pass the physics check.
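The feedback step described above might be sketched roughly as follows. Karamba runs inside Grasshopper and is not called here: `beam_check` is a hypothetical stand-in that applies the closed-form bending check for a simply supported rectangular beam under uniform load. The `Form` class, the load, and the allowable stress are all illustrative assumptions, not values from the project.

```python
from dataclasses import dataclass


@dataclass
class Form:
    """Hypothetical stand-in for a generated form: one rectangular beam."""
    span_m: float    # clear span in metres
    width_m: float   # section width in metres
    depth_m: float   # section depth in metres


def beam_check(form: Form, load_n_per_m: float = 5_000.0,
               allowable_pa: float = 10e6) -> bool:
    """Toy structural check (NOT Karamba): max bending stress vs. allowable."""
    moment = load_n_per_m * form.span_m ** 2 / 8             # M = w L^2 / 8
    section_modulus = form.width_m * form.depth_m ** 2 / 6   # S = b h^2 / 6
    return moment / section_modulus <= allowable_pa


def label_forms(forms):
    """Pair every form with its pass/fail result so failures can be reused as training data."""
    return [(form, beam_check(form)) for form in forms]


candidates = [Form(4.0, 0.1, 0.3), Form(8.0, 0.1, 0.2)]
labels = label_forms(candidates)
pass_rate = sum(ok for _, ok in labels) / len(labels)
print(pass_rate)  # 0.5: the short, deep beam passes and the long, shallow one fails
```

The point is the shape of the loop: every candidate gets a result, and failed candidates are kept alongside passing ones rather than discarded.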

Speed

200 forms per hour on a single GPU. That's a design space no human team could explore manually.
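As a quick check on those numbers, the arithmetic can be written out. This sketch assumes the quoted rate and the 68% pass fraction stay constant across a run, which the figures above do not guarantee; the function names are illustrative.

```python
FORMS_PER_HOUR = 200    # single-GPU rate quoted above
VALID_FRACTION = 0.68   # share of forms that pass structural analysis


def forms_generated(hours: float) -> int:
    """Total forms produced in the given number of hours."""
    return round(FORMS_PER_HOUR * hours)


def forms_expected_valid(hours: float) -> int:
    """Expected number of those forms that pass structural analysis."""
    return round(forms_generated(hours) * VALID_FRACTION)


print(forms_generated(2))       # 400
print(forms_expected_valid(2))  # 272
```

Two hours on one GPU gives the 400 forms in the figure caption, of which roughly 272 would be expected to pass.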

Material Efficiency

Because the AI optimizes for structure, generated forms often use 20-30% less material than conventional designs.

Research Process

Curate Data

50,847 images and 3D models, tagged by typology, structure, and material

Train Network

StyleGAN3 with architectural conditioning, trained for 72 hours on four NVIDIA A100s

Validate Structure

Every generated mesh goes through Karamba FEA. Failures become training signal.
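One common way to turn a failed check into a training signal is to add a penalty term to the generator's loss. How the feedback is actually wired in is not specified here, so the following is only a sketch under that assumption. `utilization` (demand divided by capacity, as an FEA tool might report it), `weight`, and both function names are hypothetical.

```python
def structural_penalty(utilization: float) -> float:
    """Zero while the form is within capacity (utilization <= 1), linear beyond it."""
    return max(0.0, utilization - 1.0)


def generator_loss(adversarial_loss: float, utilization: float,
                   weight: float = 10.0) -> float:
    """Adversarial loss plus a weighted penalty for overstressed forms."""
    return adversarial_loss + weight * structural_penalty(utilization)


print(generator_loss(0.7, utilization=0.8))  # 0.7, no penalty for a passing form
print(generator_loss(0.7, utilization=1.5))  # 5.7, the failure raises the loss
```

In a gradient-based setup the penalty would need to be differentiable with respect to the generator's output, so a real pipeline would likely need a surrogate or another workaround for the FEA step.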

Human Selection

Architects guide the process with sketches, sliders, and iteration

Research Phases

Dataset Curation

Six months collecting, cleaning, and tagging 50,847 architectural samples. Most AI projects fail here. We didn't.

Network Training

StyleGAN3 on four A100 GPUs for 72 hours. We tried diffusion models too, but GANs were faster for iteration.

Physics Integration

Connecting the latent space to Karamba FEA. Now the network gets feedback on structural validity in real time.

Product Deployment

Wrapping this into Archly.ai so architects can use it without touching Python.

Key Thinkers

Frei Otto

Otto spent decades with physical models, soap films, and hanging chains. He showed that optimal forms emerge from material behavior, not drawing. Our AI compresses that kind of exploration into hours.

Mario Carpo

Carpo distinguishes between 'digital' and 'computational' design. Digital means drawing on a computer. Computational means letting the computer design. Our work is computational.

Zaha Hadid Architects

ZHA showed that curved, flowing forms could be built at scale. Our AI extends their parametric language into territory even they haven't explored.

Ian Goodfellow

Goodfellow and his co-authors introduced GANs in 2014. Without that breakthrough, none of this would be possible. We adapted the framework for structural constraint satisfaction.

Case Studies

ITU Biomimetic Pavilion

Istanbul Technical University

The first built structure from our AI pipeline. A coral-inspired branching canopy that passed structural analysis on the first try. Built in 2024 as a proof of concept.

Dubai Bridge Competition

Dubai Design Week 2024

We generated 1,247 pedestrian bridge variants in one afternoon. The jury selected a form no human would have drawn. Second place.

Archly.ai Form Engine

Commercial Product

The research became a product. Architects type constraints in plain English. The AI generates validated options. Currently in beta with 340 users.

Comparative Analysis

GANs

Fast but Risky

Generator versus discriminator. Produces forms quickly, but can get stuck repeating itself. Needs careful tuning.

Diffusion Models

Slow but Reliable

Builds forms by gradually removing noise. Higher quality, more diversity, but takes longer to generate.

Neural Radiance Fields

Not Really Generative

NeRF reconstructs existing spaces from photos. Great for documentation, but doesn't invent new forms.

Our Approach

GAN + Physics

We combine fast generation with real-time structural feedback. The physics engine rejects bad forms before you see them.
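Rejecting bad forms before they are shown amounts to a filter between generator and viewer. A minimal sketch, assuming a `generate` callable and an `is_valid` check (both hypothetical placeholders; here a random number stands in for a form and a threshold stands in for the physics engine):

```python
import random
from itertools import islice


def validated_forms(generate, is_valid):
    """Yield only candidates that pass the check; failures never reach the caller."""
    while True:
        form = generate()
        if is_valid(form):
            yield form


rng = random.Random(42)
# Toy stand-ins: a "form" is a number in [0, 1) and it "passes" below 0.68.
shown = list(islice(validated_forms(rng.random, lambda x: x < 0.68), 5))
print(len(shown))  # 5 forms reach the architect, however many were rejected
```

Because the generator is lazy, rejected candidates cost only generation and checking time and never reach the interface.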

Optimization Results

What percentage of generated forms can actually be built?

Key Findings

The AI finds forms between styles. Feed it Gothic cathedrals and Zaha Hadid towers, and it interpolates. The results are structurally valid hybrids that exist in no historical category.

23 novel typologies

AI-generated forms often discover non-intuitive load paths. Diagonal bracing patterns that reduce steel by 12-18%. The network optimizes for physics, not aesthetics.

12-18% material saved

Latent space navigation feels like time travel. You can morph continuously from tower to bridge to shell. The journey itself suggests forms.
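Morphing between two forms usually means interpolating between their latent vectors and decoding each intermediate point. The sketch below uses plain linear interpolation. The 512-dimensional vectors and the names `z_tower` and `z_bridge` are illustrative assumptions, and the decoding step is left out because it depends on the trained generator.

```python
import random


def lerp(z_a, z_b, t):
    """Linear interpolation between two latent vectors for t in [0, 1]."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


def morph_path(z_a, z_b, steps):
    """Evenly spaced latent vectors from z_a to z_b, endpoints included (steps >= 2)."""
    return [lerp(z_a, z_b, i / (steps - 1)) for i in range(steps)]


rng = random.Random(0)
z_tower = [rng.gauss(0, 1) for _ in range(512)]   # hypothetical latent for a tower
z_bridge = [rng.gauss(0, 1) for _ in range(512)]  # hypothetical latent for a bridge
path = morph_path(z_tower, z_bridge, steps=8)
# Each vector in `path` would be passed to the trained generator to decode one in-between form.
```

Spherical interpolation is often preferred for Gaussian latents because it keeps intermediate vectors at a typical norm, but the linear version shows the idea.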

4 seconds to interpolate

Physics feedback makes everything better. GANs with structural validation produce forms 30% more efficient than GANs alone.

30% efficiency gain

Honest Limitations

Mode collapse is real. The network sometimes fixates on towers. We don't fully understand why.

Interpretability is limited. We can't always explain why a particular latent vector produces a good form.

Scale blindness. The model struggles with consistent scale unless we explicitly condition it.

Fabrication gap. Forms are optimized for structure, not for how you'd actually build them.
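Of the limitations above, mode collapse is the easiest to monitor numerically: if a batch of generated forms becomes too similar, a diversity score drops toward zero. A minimal sketch, assuming each form has already been reduced to a small feature vector (the two-number descriptors below are hypothetical):

```python
from itertools import combinations
from math import dist


def mean_pairwise_distance(samples):
    """Average Euclidean distance over all pairs of feature vectors (needs >= 2 samples)."""
    pairs = list(combinations(samples, 2))
    return sum(dist(a, b) for a, b in pairs) / len(pairs)


diverse = [[0.0, 0.0], [3.0, 4.0], [6.0, 8.0]]
collapsed = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
print(mean_pairwise_distance(diverse))    # (5 + 10 + 5) / 3, about 6.67
print(mean_pairwise_distance(collapsed))  # 0.0, every sample is identical
```

Tracking a score like this per batch would flag a drift toward one typology, such as towers, though it would not explain the cause.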

Conclusion

Machines can dream buildings. Not copies of what they've seen, but genuinely new forms that physics accepts. With 68% structural validity and 12-18% material savings, AI-assisted design isn't a future prospect. It's working now.

Limitations

- Mode collapse requires intervention

- Interpretability remains limited

Future Directions

- Real-time physics-aware generation

- Direct-to-fabrication pipeline